From OLTP Systems to Lakehouses – A Practical Approach

In a world where data is not only an asset but also a liability if mismanaged, Data Monitoring has emerged as a critical discipline. Whether you’re managing operational databases, enterprise Data Warehouses, or modern Lakehouse platforms, being able to observe, measure, and track the state of your data is essential for maintaining trust, performance, and compliance.

This article explores the principles of effective Data Monitoring — and how tools like HEDDA.IO can help implement them at scale across diverse platforms like Microsoft SQL Server, Databricks, and Microsoft Fabric Lakehouse.

What Is Data Monitoring?

Data Monitoring refers to the continuous observation of data health, structure, and quality over time. Unlike point-in-time data validation (which answers “Is this data correct right now?”), Data Monitoring helps answer broader, ongoing questions:

- Is the data changing as expected over time?

- Are key metrics or dimensions missing, drifting, or inconsistent?

- Are data types and schema definitions aligned with expectations?

- Are critical Business Rules being violated more frequently?

- Are new tables and columns being added without validation coverage?

In essence, Data Monitoring combines technical observability (schema, types, structure) with business logic awareness (Rule compliance, KPI thresholds).
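
To make the technical-observability half concrete, here is a minimal sketch of schema drift detection between two snapshots. The snapshot shape (`{table: {column: type}}`) and the function name `diff_schema` are assumptions chosen for illustration; they are not part of HEDDA.IO or any specific platform.

```python
def diff_schema(baseline: dict[str, dict[str, str]],
                current: dict[str, dict[str, str]]) -> dict[str, list]:
    """Compare two {table: {column: type}} snapshots and list what changed."""
    changes: dict[str, list] = {
        "added_tables": sorted(current.keys() - baseline.keys()),
        "removed_tables": sorted(baseline.keys() - current.keys()),
        "added_columns": [],
        "removed_columns": [],
        "type_changes": [],
    }
    # Only tables present in both snapshots can have column-level drift.
    for table in sorted(baseline.keys() & current.keys()):
        old, new = baseline[table], current[table]
        for col in sorted(new.keys() - old.keys()):
            changes["added_columns"].append((table, col))
        for col in sorted(old.keys() - new.keys()):
            changes["removed_columns"].append((table, col))
        for col in sorted(old.keys() & new.keys()):
            if old[col] != new[col]:
                changes["type_changes"].append((table, col, old[col], new[col]))
    return changes


# Hypothetical snapshots taken on two different days.
yesterday = {"orders": {"id": "INT", "amount": "DECIMAL", "note": "VARCHAR"}}
today = {
    "orders": {"id": "BIGINT", "amount": "DECIMAL", "channel": "VARCHAR"},
    "returns": {"id": "INT"},
}
drift = diff_schema(yesterday, today)
```

New tables and columns reported here are exactly the ones that may lack validation coverage, so they are natural candidates for review.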

The Foundation: Structured Knowledge of Your Data

To monitor data effectively, you first need structured knowledge about your data sources:

- What tables and views exist?

- What are the data types, constraints, and relationships?

- What is the intended purpose or domain of each column?

Without this metadata baseline, monitoring quickly turns into guesswork.

That’s why a key starting point in any monitoring initiative is to systematically capture and model your data structure — not just the values.
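
As a rough illustration of capturing such a baseline, the sketch below reads table, view, and column metadata from a database catalogue. It uses SQLite from the Python standard library purely as a stand-in so the example runs anywhere; on SQL Server or a Lakehouse you would query that platform's own catalogue instead. The function name `snapshot_schema` and the snapshot layout are assumptions.

```python
import sqlite3


def snapshot_schema(conn: sqlite3.Connection) -> dict[str, dict[str, dict]]:
    """Return {table_or_view: {column: {"type", "nullable", "primary_key"}}}."""
    objects = conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type IN ('table', 'view') AND name NOT LIKE 'sqlite_%' "
        "ORDER BY name"
    ).fetchall()
    snapshot: dict[str, dict[str, dict]] = {}
    for (name,) in objects:
        # Quote the identifier so unusual table names do not break the PRAGMA.
        quoted = '"' + name.replace('"', '""') + '"'
        # Each row is (cid, name, type, notnull, dflt_value, pk).
        columns = conn.execute(f"PRAGMA table_info({quoted})").fetchall()
        snapshot[name] = {
            col_name: {
                "type": col_type.upper(),
                "nullable": not notnull,
                "primary_key": pk > 0,
            }
            for _cid, col_name, col_type, notnull, _default, pk in columns
        }
    return snapshot


if __name__ == "__main__":
    demo = sqlite3.connect(":memory:")
    demo.execute(
        "CREATE TABLE customers ("
        "id INTEGER PRIMARY KEY, email TEXT NOT NULL, signup_date TEXT)"
    )
    print(snapshot_schema(demo))
```

Storing one such snapshot per run gives later checks something stable to compare against.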

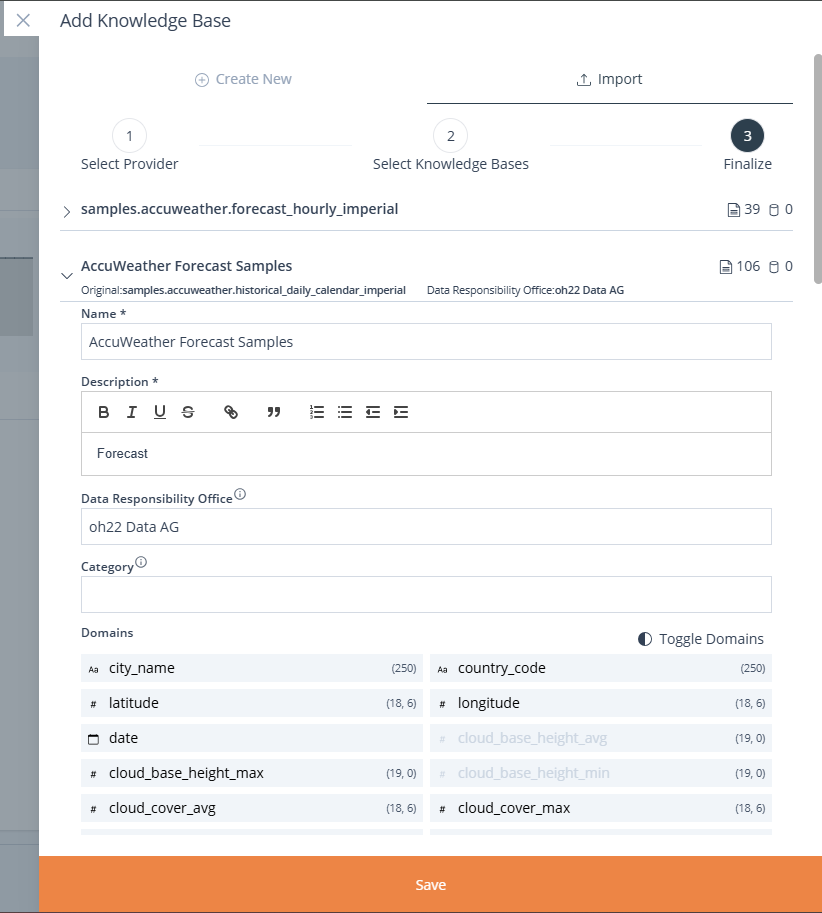

Fast Metadata Acquisition with HEDDA.IO

This is where HEDDA.IO comes in: it automates the capture of that metadata baseline.

HEDDA.IO allows users to generate complete Knowledge Bases by scanning and importing full schema definitions from a wide range of supported systems, including:

- Microsoft SQL Server

- Azure SQL Database

- Databricks

- Microsoft Fabric Lakehouse

Once connected, HEDDA.IO automatically retrieves:

- Table structures

- Column data types

- Optional: descriptions or extended properties from catalogue systems

Automated Domain and Type Mapping

After schema import, HEDDA.IO analyses each column and automatically assigns a Domain.

This step, which would otherwise be manual and error-prone, is fully automated — saving hours of effort and ensuring consistency.
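
The article does not describe how HEDDA.IO derives Domains, so the following is only a hypothetical sketch of what rule-based domain assignment can look like in general: a column name pattern combined with the column's base data type. The domain names, patterns, and the function `infer_domain` are all invented for illustration.

```python
import re

# Assumed groupings of SQL base types; extend them for your own platform.
_TEXT = {"CHAR", "VARCHAR", "NCHAR", "NVARCHAR", "TEXT", "STRING"}
_NUMERIC = {"INT", "INTEGER", "BIGINT", "DECIMAL", "NUMERIC", "FLOAT", "DOUBLE"}
_TEMPORAL = {"DATE", "DATETIME", "DATETIME2", "TIMESTAMP"}

# (name pattern, allowed base types, domain); the first match wins.
_NAME_RULES = [
    (re.compile(r"e[-_]?mail", re.IGNORECASE), _TEXT, "Email"),
    (re.compile(r"country", re.IGNORECASE), _TEXT, "Country"),
    (re.compile(r"amount|price|total", re.IGNORECASE), _NUMERIC, "Amount"),
]


def base_type(sql_type: str) -> str:
    """Strip length/precision, e.g. 'nvarchar(255)' -> 'NVARCHAR'."""
    return re.split(r"[\s(]", sql_type.strip(), maxsplit=1)[0].upper()


def infer_domain(column_name: str, sql_type: str) -> str:
    """Assign a domain from the column name first, then fall back to the type."""
    kind = base_type(sql_type)
    for pattern, allowed_types, domain in _NAME_RULES:
        if kind in allowed_types and pattern.search(column_name):
            return domain
    if kind in _TEMPORAL:
        return "Date"
    if kind in _NUMERIC:
        return "Number"
    if kind in _TEXT:
        return "Text"
    return "Unknown"
```

Columns that end up as `Unknown` are the ones worth reviewing by hand, which mirrors the review step in the onboarding flow.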

With this foundation in place, users can immediately begin defining Rulebooks, which are collections of validation Rules tied to specific tables, columns, or domain combinations.
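
To illustrate the idea of a Rulebook as a collection of Rules tied to a table and its columns, here is a small, self-contained sketch. The `Rule` and `Rulebook` classes below are hypothetical and do not reflect HEDDA.IO's actual data model or API; they only show the shape of the concept.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass(frozen=True)
class Rule:
    name: str
    column: str
    check: Callable[[Any], bool]  # returns True when the value is valid


@dataclass
class Rulebook:
    table: str
    rules: list[Rule] = field(default_factory=list)

    def validate(self, rows: list[dict[str, Any]]) -> dict[str, int]:
        """Return the number of violating rows per rule name."""
        return {
            rule.name: sum(
                1 for row in rows if not rule.check(row.get(rule.column))
            )
            for rule in self.rules
        }


# Hypothetical rules and sample rows for an "orders" table.
orders_rulebook = Rulebook(
    table="orders",
    rules=[
        Rule("email_has_at_sign", "email",
             lambda v: isinstance(v, str) and "@" in v),
        Rule("amount_non_negative", "amount",
             lambda v: isinstance(v, (int, float)) and v >= 0),
    ],
)
sample_rows = [
    {"email": "a@example.com", "amount": 10.0},
    {"email": None, "amount": 5.0},
    {"email": "not-an-email", "amount": -1.0},
]
violations = orders_rulebook.validate(sample_rows)
```

Returning counts per Rule, rather than a single pass/fail flag, is what makes it possible to track whether a Rule is being violated more frequently over time.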

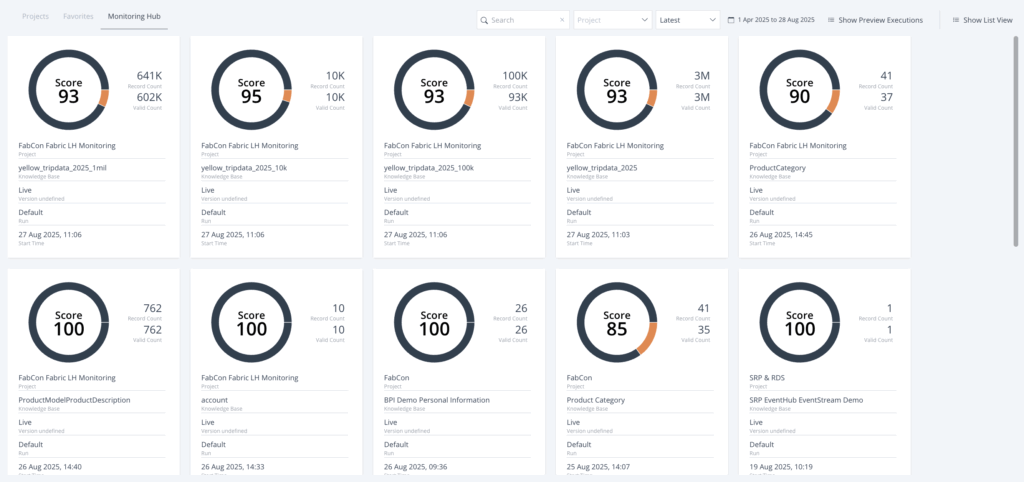

Monitoring as a Lifecycle, Not a Snapshot

Data Monitoring is an ongoing process, not a one-time validation. Once Knowledge Bases and Rulebooks are in place, users can:

- Schedule validations at regular intervals (e.g., hourly, daily)

- Track changes over time using versioned knowledge models

- Aggregate validation results across projects or domains

- Detect anomalies such as unexpected null rates, category explosion, or value range shifts

This applies across data environments — whether it’s a normalized OLTP system or a modern Data Lakehouse with semi-structured or append-only data.
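
As one example of the anomaly detection listed above, an unexpected null rate can be flagged by comparing the latest run against the history of earlier runs. The sketch below uses a simple z-score with a floor on the standard deviation; the threshold values and function names are assumptions, and a production setup would likely need seasonality-aware baselines.

```python
from statistics import mean, pstdev


def null_rate(values: list) -> float:
    """Share of None entries in a column sample (0.0 for an empty sample)."""
    if not values:
        return 0.0
    return sum(v is None for v in values) / len(values)


def is_anomalous(history: list[float], latest: float,
                 z_threshold: float = 3.0, min_std: float = 0.01) -> bool:
    """Flag `latest` if it deviates strongly from the historical mean.

    `min_std` keeps a very stable history from turning tiny
    fluctuations into alerts.
    """
    if len(history) < 2:
        return False  # not enough history to judge
    baseline = mean(history)
    spread = max(pstdev(history), min_std)
    return abs(latest - baseline) / spread > z_threshold


# Hypothetical null rates of one column over the last five scheduled runs.
past_null_rates = [0.01, 0.02, 0.015, 0.01, 0.02]
```

The same pattern applies to other per-run metrics, such as the number of distinct categories or the minimum and maximum of a numeric column.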

Rapid Setup Enables Iterative Monitoring

Thanks to the automated onboarding process, HEDDA.IO allows teams to:

- Connect to a data source

- Import schema definitions in minutes

- Review automatically generated column mappings and domains

- Begin defining business logic using Rulebooks

- Execute validations and monitor continuously

This short time-to-monitoring enables even lean teams to deploy enterprise-grade Data Monitoring across all their environments.

Summary

Data Monitoring is no longer optional — it’s a foundational requirement for any data-driven business. To do it right, you need:

- A structured understanding of your data sources

- The ability to define and apply Business Rules

- Automation to support continuous validation

- Aggregated, multi-level visibility into data health

- Compatibility with modern platforms like Databricks and Microsoft Fabric

HEDDA.IO offers the infrastructure to enable this — but the goal remains clear:

Maintain complete visibility and control over your data, every day.