RELEASE NOTES.

Breaking Changes

API Server

We have removed the API Server from the Server Landscape. All tasks previously handled by the API Server are now part of the Frontend Server, which is now referred to as the HEDDA.IO Server. This change helps reduce operational and maintenance costs while eliminating the need to duplicate logic.

Note: This requires the PyRunner and DotNetRunner to be set up with the Frontend URL.

# Prior:

hedda = Hedda.create(base_url=api_url,...)

# Now:

hedda = Hedda.create(base_url=frontend_url,...)

Container App Job / WebRunner

We have redesigned the Container App Job to function as a Full Web Service rather than just a Worker Process. This architectural change brings several advantages. With a dedicated server now handling Validation executions, the HEDDA.IO Server (formerly the Frontend) can also utilize it for Preview functionality. This reduces infrastructure costs, as only one high-performance Server is needed for validation tasks instead of two. Additionally, since the service is always running, the previous startup delay of around 15 seconds for external executions has been eliminated. Another key benefit is the ability to scale executions horizontally, enabling better performance under high workloads with frequent parallel executions.

Edit Version Executions

Starting a validation against the current Edit Version remains possible. However, with the introduction of named Edit Versions, the call for this Execution has changed slightly.

DotNetRunner

// Prior:

var hedda = Hedda.Create(..., workflowConfig: new() { ExecuteAgainstDev = true })

// Now:

hedda.UseKnowledgeBase(kbName, versionName)

PyRunner

# Prior:

hedda.use_knowledge_base(kb_name, use_current=False)

# Now:

hedda.use_knowledge_base(kb_name, version_name=version_name)

New Features

Git Integration

You can now store and manage your Knowledge Base content directly in a Git repository! This new feature allows you to:

- Version Control: Track changes to your Knowledge Base and easily revert to previous versions.

- Edit Versioning: Multiple Users can now work on the same Knowledge Base at the same time, each on their own version (branch) without impacting each other.

- Multi Environments: You can now configure different Git branches to serve as your Knowledge Bases for different environments (e.g., Development, Staging, Production). This allows for safe testing and deployment of updates before they go live to your Users.

- Automation: Integrate your Knowledge Bases into your CI/CD pipelines for automated updates and deployments.

Improvements

Single Row Processing

- Bring Your Own ExecutionId: The ExecutionStart call can now also have the X-Execution-Id header, which will be used to create the Execution. Keep in mind that this id should not collide with existing Executions.

- If the ExecutionStart option DisableRowStorage is not set explicitly to true, the processed rows will be gathered and are then also available in the Review functionality later.
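As a minimal sketch of the Bring Your Own ExecutionId option, the header could be prepared on the client side as shown below. The helper name `execution_start_headers` is hypothetical, and the assumption that the id is a UUID string is not confirmed by these notes; only the header name `X-Execution-Id` comes from the text above.

```python
import uuid


def execution_start_headers(execution_id=None):
    """Return the extra HTTP header for an ExecutionStart call.

    Hypothetical helper: if no id is supplied, a random UUID is generated
    client-side, which makes collisions with existing Executions unlikely.
    """
    # Assumption: the service accepts a UUID string as Execution id.
    execution_id = execution_id or str(uuid.uuid4())
    return {"X-Execution-Id": execution_id}


print(execution_start_headers())
```

Generating the id on the caller's side lets an upstream system reference the Execution before the first row is sent.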

Note: The SPR and the Preview functionality are now sharing the same Instance.

Keep this in mind when determining the necessary scale of the WebRunner Service.

The DotNetRunner was also extended and can be run directly in Single Row Processing mode enabling it to instantiate once and validate Data as they come in.

var hedda = Hedda.Create(frontendUrl, apiKey)
    .UseProject(projectName)
    .UseKnowledgeBase(kbName)
    .UseRun(runName)
    .StartSingle();

// Validate rows one by one as they arrive.
var rowResult1 = hedda.ProcessRow([col1, col2, col3]);
var rowResult2 = hedda.ProcessRow([col1, col2, col3]);

// Close the Execution once all rows are processed.
var heddaResult = hedda.Finish();

The endpoint /api/processRow/verbose was added. It changes the response slightly to include the names of the Column, Rulebook and Business Rule results. Instead of being returned in an array, the values are now returned in an object whose keys are the names of the entities.

{

"RowIndex": 1,

"Valid": true,

"Error": null,

"ColumnResults": {

"Sales Order ID": {

"Value": "1ed99882-9d6e-4b5c-b6e2-50b00c987573",

"OriginalValue": "1ed99882-9d6e-4b5c-b6e2-50b00c987573",

"PhoneticValue": null,

"MemberResult": null

},

"Customer Name": {

"Value": "Travis Ryan",

"OriginalValue": "Travis Ryan",

"PhoneticValue": null,

"MemberResult": null

}

},

"RulebookResults": {

"Product validation": true,

"Sales Data Check": false

},

"BusinessRuleResults": {

"DataType Validation": true,

"Member Validation": true

}

}

Search

- Enhanced search logic for more accurate results.

- Improved user interface for streamlined searching.

- Increased search scope with inclusion of definitions from Git repositories.

PyRunner

The PyRunner was updated and the pure Spark Executor was removed. The new default executor is the Interop Executor, which allows for better feature parity with the DotNetRunner because it uses the DotNetRunner under the hood. This approach also improved execution performance considerably. The external executor is still available and has received updates as well, such as showing the current execution state and improved error handling.

The IPC layer of the Interop Executor was also improved. Using Apache Arrow as the transmission format reduces communication time by ~20% on the current Spark version 3.5. With Spark 4.0 (currently in Preview), this grows to an impressive ~80% out of the box. To activate this feature, set the config option USE_PYARROW_IPC to True.

One caveat: PyArrow is not available in every Spark environment. So far we have found that it is available in native Spark environments but not in Spark Connect environments such as Databricks Serverless and Databricks Shared Compute. Databricks Personal Compute and Azure Fabric are supported.

| Configuration | Time |
|---|---|
| PyArrow Off | 120 s |
| PyArrow On (Spark 3.5) | 86 s |
| PyArrow On (Spark 4.0) | 13 s |
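To relate the table to relative savings, the timings can be converted into percentages. This is plain arithmetic on the three values listed above; the assumption that they are total run times of the same workload is ours, and the helper name `reduction_percent` is illustrative only.

```python
# Times in seconds, taken from the table above.
timings = {
    "PyArrow Off": 120,
    "PyArrow On (Spark 3.5)": 86,
    "PyArrow On (Spark 4.0)": 13,
}

baseline = timings["PyArrow Off"]


def reduction_percent(seconds, baseline_seconds):
    """Percentage of the baseline time that was saved."""
    return (baseline_seconds - seconds) / baseline_seconds * 100


for name, seconds in timings.items():
    print(f"{name}: {reduction_percent(seconds, baseline):.1f}% less time")
```

For these measurements the script reports roughly 28% less time on Spark 3.5 and roughly 89% less on Spark 4.0, relative to running without PyArrow.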

Miscellaneous

- Improved the performance of the Audit queries.

- Expanded Domains used in Business Rules to also check Preparations and Actions.

- During import of Knowledge Bases, a connection is tested before it is saved or data are loaded.

- Improved Environments forms in Excel-Addin and added a button that opens the respective HEDDA.IO Environment.

- Alphabetically ordered Member Search Algorithms, Business Rule Actions and Conditions.

- Added possibility to order Projects by Name, Owner or Creation Date on the Project Overview Page.

- Monitored Run Executions can now be filtered to only show the most recent or last Run Execution.

- Monitored Run Executions can now be shown as aggregated statistic tiles.

- Add/Edit Connection forms now show the currently selected file.

- Added Member Validation and Data Type Validation Filter for Business Rule Filter Options in Preview Page.

- PyRunner External Executions no longer poll the Data Lake for the Result File. They poll the new WebRunner Service instead, which prevents infinite waiting when an Execution fails fatally.

- It is now possible to create Connections for Data Links while importing a Knowledge Base.

- HEDDA.IO + Excel Add-In User Manuals are now available for download on the HELP page under Documentation.

- Improved memory footprint in WebRunner and PreviewRunner.

Bugfixes

- Removed Variable Domains from Default Run Mapping.

- Fixed a pagination bug in Audit Portal in which the User could set a page lower than 1.

- Fixed a bug where the Favorite Button would delete the wrong Favorite.

- Fixed a bug where deleting an object would delete all Favorites.

- Cell colorization for boolean values in the Excel Add-In now works correctly, independent of the User's language settings.

- Prevented saving synonyms with empty values in the Member Drawer.

- Knowledge Base Import from Provider forms now reset on selecting another provider.

- Prevented self-looping Business Rules in Advanced Flow Editor.

- Some UI text overflow improvements.

- Fixed an error that occurred on save, when replacing the Root Business Rule in Advanced Flow Editor.

- Fixed validity icons in Rulebook Flow Display for not executed Rulebooks/Business Rules.

- Disallowed selection of a folder, where a file should be selected in Add/Edit Connection forms.

- Selecting data from a Range that intersects a table in Excel-Addin, now correctly uses selected data instead of the whole table.

- Fixed the Query for items with Data Type Validation Issues in Preview.

- Fixed an issue when validating DateTime columns in Single Row Processing.

New Features

Excel Add-in Validation

The Excel Add-in was extended so that it is now possible to execute a Validation directly from data currently present in an Excel sheet.

Import Knowledge Bases using External Connections

It is now possible to use an existing External Connection to import a Knowledge Base. After selecting the provider on the Import Knowledge Base page, a Tab ‘Use Existing’ can be opened if a matching connection was found. Click on the shown connection to continue the Import process.

Save External Connection during Knowledge Base Import

In the first step of the Import Knowledge Base process, it is now possible to click on Save Connection to change the form to add an External Connection. On clicking Next, the External Connection is persisted and the Import process continues as per usual.

Advanced Flow Editor

A new Flow Editor was added to allow for easier restructuring of the Flow without immediate invalidity hints. In the Advanced Flow Editor it is possible to alter the connections between the Business Rules freely even if it results in Cycles or Orphans. The changes will not be saved directly but collected and only persisted after clicking on the Save Button.

Following Options are available:

- Validate: Validates the current flow and displays error hints on Nodes.

- Format: Arranges the Nodes with the Network Simplex Algorithm.

- Discard: Resets the Flow to the currently persisted State.

- Cancel: Returns to the Regular Flow Display without persisting changes.

- Save: Verifies the validity and persists the changes.

- Remove Business Rule: The Trash icon on the Nodes will remove the Business Rule.

- Remove Connection: The X icon on the connection will remove this specific Connection.

- Add Connection: Drag and Drop a line from the Valid/Invalid handle of one Node to the target handle of another Node.

- Add Business Rule: Drag and Drop a Connection from the Valid/Invalid handle onto an empty space. Alternatively, CTRL+Click on an empty space in the canvas.

- Rename a Business Rule: Double click on the label of a Node and enter the new name. Pressing Enter or leaving the Node will end renaming.

- Mark Multiple Business Rules: Hold down SHIFT and draw a rectangle over the nodes to be marked. Alternatively, hold CTRL while clicking on a Business Rule.

- Copy & Paste: Marked Business Rules can be copied and pasted between Rulebooks of the same Knowledge Base.
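The kind of check the Validate option performs for Cycles and Orphans can be illustrated with a small graph routine. This is only a sketch under assumptions: the flow is modelled as a dictionary from each Business Rule to its follow-up rules, an Orphan is taken to mean a rule that cannot be reached from the root rule, and the function names are hypothetical. It does not describe how HEDDA.IO implements the validation.

```python
def find_orphans(edges, root):
    """Return rules that cannot be reached from the root rule."""
    reachable, stack = set(), [root]
    while stack:
        node = stack.pop()
        if node not in reachable:
            reachable.add(node)
            stack.extend(edges.get(node, []))
    return set(edges) - reachable


def has_cycle(edges):
    """Detect a cycle with a depth-first search and three node states."""
    unvisited, in_progress, done = 0, 1, 2
    state = {node: unvisited for node in edges}

    def visit(node):
        state[node] = in_progress
        for target in edges.get(node, []):
            target_state = state.get(target, unvisited)
            if target_state == in_progress:
                return True  # back edge: the flow loops onto itself
            if target_state == unvisited and visit(target):
                return True
        state[node] = done
        return False

    return any(state[node] == unvisited and visit(node) for node in edges)


# Every rule appears as a key; "C" is not connected to the root.
flow = {"Root": ["A", "B"], "A": ["B"], "B": [], "C": ["A"]}
print(find_orphans(flow, "Root"), has_cycle(flow))
```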

Welcome Page Improvements

Following up the list view released last time, the Welcome Page has received more improvements.

Favorites

HEDDA.IO now supports marking Projects, Knowledge Bases and their content as favorite. The favorite icon, a star, can be found in the top right of the Details areas where a favorite can be set or removed.

The Welcome Page now also has a tab for Favorites next to Projects. There you can see your Favorites, edit and remove them, or open them by clicking on a Favorite. Favorites can be viewed as cards or as a list. The view can be switched with the “Show View” icon on the right of the tabs.

More information can be found in the User Documentation.

Monitoring Hub

The Monitoring Hub offers a quick and easy way to see if recent Executions have finished successfully.

On the details page of a Run, the Run can be added to Monitoring by clicking on the “Add to Monitoring” tile under Statistics.

Run Executions of these Runs are then shown under the tab Monitoring Hub on the Welcome Page. By default, the Executions of the last seven days are shown without Preview Executions. By clicking on an Execution, the respective Execution is opened.

Filter Functionality on the Home Page

A filter functionality has been added to the Home Page. With this, it is possible to filter your Projects, Favorites and the Monitoring Hub.

Projects, Favorites and Monitored Run Executions can be filtered by their name using the Search field. Favorites can be filtered by type using a dropdown. Monitored Run Executions can also be filtered by date and Executions started using Preview can be filtered as well. Previews are hidden by default.

In addition, Projects can be filtered by your User’s permission:

- Only Manage: Show all Projects where you have Manage permissions.

- Only Write: Show all Projects where you have Write permissions.

- Only Read: Show all Projects where you have Read permissions.

- Owner: Only show Projects where you are the Project Owner.

- Involved: For Superadmins only. Shows all Projects where you are either Owner or User.

Audit Portal

The Audit Portal lets users see all changes made to the Knowledge Base since it was created. It shows what was modified, what type of component it is (like Domains, Rulebooks, and so on), when the change happened, who made the change, the Knowledge Base version at the time, whether it was added, removed, or changed, and if the component is published or still a draft. Users can filter by specific component types and states, or set a custom date range, making it easy to track and review all updates.

The Audit Portal ensures full traceability and easy navigation through the Knowledge Base history.

Improvements

Rulebook Display Improved

The Rulebook Flow Screen was Improved to make it easier to grasp each Node.

The following changes were applied:

- Added contrast to Heading to distinguish easier between Nodes on low contrast monitors.

- Reduced Node height to fit more Nodes on screen.

- Moved Information about Actions and Preparations to Heading Icons to make it easier to see its status.

- Changed display of Data Quality Dimensions so that its value is recognized more easily.

- Enlarged Node Handles to make it easier to select them.

API

API Server

Some ApiServer Endpoints were extended so that they can be called not only by name but also by id.

- Project: /api/Project/{nameOrId}

- Knowledge Base: /api/Project/{projectId}/KnowledgeBase/{nameOrId}

- Run: /api/Project/{projectId}/KnowledgeBase/{kbId}/Run/{nameOrId}

- Lookup: /api/Project/{projectId}/KnowledgeBase/{kbId}/Lookup/{nameOrId}/data
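A client could assemble such a URL as sketched below for the Run endpoint. The base URL, the id values and the function name `run_url` are placeholders for illustration; only the path pattern is taken from the list above. Percent-encoding matters when a name rather than an id is used, because names may contain spaces.

```python
from urllib.parse import quote


def run_url(base_url, project_id, kb_id, run_name_or_id):
    """Build the Run endpoint URL from the pattern listed above."""
    segments = [
        "api", "Project", project_id,
        "KnowledgeBase", kb_id,
        "Run", run_name_or_id,
    ]
    # quote(..., safe="") percent-encodes spaces and slashes inside a name.
    path = "/".join(quote(str(segment), safe="") for segment in segments)
    return base_url.rstrip("/") + "/" + path


# Placeholder host and ids, not real values.
print(run_url("https://hedda.example.com", 12, 34, "Nightly Sales Check"))
```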

Execute Preview Endpoint

The API surface for starting a Preview was improved.

- Only properties describing the DataSource are now necessary.

- It is now possible to send data directly to the Preview Endpoint to get verified.

Runner

If a Run has no Mapping, a default Mapping will be assumed. This default Mapping will match each Domain to a field of the same name in the data source. A Run will fail if not all Domains are matched. In this case, a new Mapping needs to be created and configured to match the Domains.
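The default Mapping rule described above can be pictured as follows. This is a sketch only: the function name is hypothetical, and exact, case-sensitive name matching is an assumption that the notes do not confirm.

```python
def build_default_mapping(domains, source_fields):
    """Map each Domain to the source field with the same name.

    Raises ValueError when a Domain has no matching field, which mirrors
    the Run failing if not all Domains are matched.
    """
    available = set(source_fields)
    # Assumption: names must match exactly, including letter case.
    missing = [domain for domain in domains if domain not in available]
    if missing:
        raise ValueError(f"No field found for Domains: {', '.join(missing)}")
    return {domain: domain for domain in domains}


mapping = build_default_mapping(
    ["Sales Order ID", "Customer Name"],
    ["Sales Order ID", "Customer Name", "Region"],
)
print(mapping)
```

Extra fields in the data source, such as "Region" here, are simply ignored by the sketch; only unmatched Domains cause a failure.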

In addition, a default Run will be created if a new Knowledge Base is created. This will not change existing Knowledge Bases.

PyheddaIO Runner

The PyheddaIO was rewritten and is now based on an interoperability approach that uses the Dotnet Runner directly in Spark environments. There are now three options to choose from:

- local (default): Fast for smaller datasets which might be executed on the driver node.

- spark: Fast for larger datasets. Work will be distributed on multiple Workers.

  - Downside: Gathering of Domain Statistics and execution of DataSet Rules is slow. Having many Lookups is also detrimental as they need to be gathered in every spawned process.

- external: In general decent performance.

  - Downside: Has overhead of starting the external Runner and moving data to and from it.

Bugfixes

- Operators in Conditions and Actions now update in the UI if the Domain changes its datatype.

- Run Execution Scores are now always rounded down to the next lowest percentage value.

- Knowledge Base Dropdowns in dynamic forms now sort Knowledge Bases in ascending order.
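The rounding rule for Run Execution Scores mentioned in the list above can be illustrated with integer arithmetic. How the score itself is derived is an assumption here (share of valid rows), and the function name is hypothetical; the point is only that rounding down keeps a nearly perfect Execution from being displayed as 100%.

```python
def execution_score(valid_rows, total_rows):
    """Return the share of valid rows as a whole percentage, rounded down."""
    if total_rows == 0:
        return 0  # assumption: an empty Execution scores 0
    # Integer floor division avoids floating point rounding surprises.
    return valid_rows * 100 // total_rows


print(execution_score(999, 1000))
```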

New Features

Databricks Project Service Principal Authentication

It is now possible to create a Databricks Connection with the configured Project Service Principal.

Project API Key

Welcome Page List View

Excel Add-In

- Include Row ID (Include a Row ID column. This enables the display of Row Details when clicking on a row.)

- Include Row Validity (Include a color-coded column, that shows the validity of a row.)

- Include Originals (Include columns containing the original values of domains.)

- Include Rulebooks

- Include Business Rules

- Include Variable Domains

- Include Data Type Validity

- Include Member Search Validity

More information can be found in the HEDDA.IO Excel Add-In documentation.

Improvements

- Improved loading of Preview Data.

- Improved Condition Form to support drag and drop for moving Business Rule Conditions between SubConditions.

Bugfixes

- Fixed some issues, where deleting an object would result in a message that no entries in a Sequence are available.

- Fixed an issue on Analyze Result Screen where an error would be shown on a race condition.

- Using a User as Project Owner who wasn’t used anywhere else in HEDDA.IO before, will not throw an error anymore.

- When adding or editing External Connections, changing to a non-existent Project Service Principal will not show an error toast anymore.

New Features

Current Date Preparation

A new Preparation Option was introduced enabling an easier retrieval of different Dates based on the Current Date. The following Dates will be provided as UTC Dates:

- Now

- Start of today

- End of today

- Start of current month

- End of current month

- Start of current year

- End of current year
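For orientation, the listed values can be derived from a single UTC timestamp as sketched below. The function name is hypothetical, and treating each "End of ..." value as the last microsecond of the period is an assumption; the Preparation may define the boundary differently.

```python
from datetime import datetime, timedelta, timezone


def current_date_values(now=None):
    """Compute the listed dates relative to a UTC timestamp."""
    now = now or datetime.now(timezone.utc)
    start_of_today = now.replace(hour=0, minute=0, second=0, microsecond=0)
    start_of_month = start_of_today.replace(day=1)
    start_of_year = start_of_month.replace(month=1)
    # Adding 32 days always lands in the following month.
    next_month = (start_of_month + timedelta(days=32)).replace(day=1)
    next_year = start_of_year.replace(year=start_of_year.year + 1)
    tick = timedelta(microseconds=1)  # assumption: periods end 1 microsecond early
    return {
        "Now": now,
        "Start of today": start_of_today,
        "End of today": start_of_today + timedelta(days=1) - tick,
        "Start of current month": start_of_month,
        "End of current month": next_month - tick,
        "Start of current year": start_of_year,
        "End of current year": next_year - tick,
    }


for label, value in current_date_values().items():
    print(f"{label}: {value.isoformat()}")
```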

Project Service Principals

It is now possible to add an Azure Service Principal for each Project separately. The following Transports have the option to utilize it:

- Microsoft Fabric Lakehouse

- Azure Data Lake Storage

- Azure Blob Storage

- Microsoft SQL Server

- Microsoft OneLake

Microsoft OneLake

Using a Microsoft OneLake Connection together with Microsoft OneLake Shortcuts makes it possible to import files from supported third-party vendors into HEDDA.IO. Currently these are AWS, Dataverse and Google Cloud Storage.

Reference Controller – OAuth Details

Improvements

- The Preview Reader Dropdown is hidden if a Reader is configured to always be running.

- Increased performance for some Database queries.

- Added Options to Preview Corrected Filter, where the Filter can be switched quickly to include all Domains or all Domains without Variables.

- Improved Domain Selection in Preview Result.

Bugfixes

- Fixed an error with trailing slashes at the end of a Databricks Connection host name.

- Added missing confirmation on DataLink deletion.

- Fixed an issue that caused items in the navigation list not being highlighted anymore.

- Disallowed empty values for API Expiration Date Dropdown.

- Fixed an issue where some tag changes were not displayed in publish dialog.

- Fixed links in tags detail to runs and rulebooks.

- Removed unlink option for tag detail, when Knowledge Base is not in edit mode.

- Fixed an issue where execution information was loaded multiple times.

- Fixed a possible race condition for external .NET Runner.

- Fixed issue when selecting tag filter in rulebook page.

- Fixed an issue where the Preview count is not correctly calculated if Corrected Filter is selected.

- Fixed an issue in DuckDb Preview Reader where Corrected value is not found if Original was NULL.

New Features

Preview Chaining

Preview Chaining is a new feature, that enables the use of Execution results from a previous Run as the Data Source for a new Run. Preview Chaining lets you choose between the Live and Edit Version of a Knowledge Base as well as filtering for valid or invalid Execution results. It is incorporated into the existing Preview Button as a new Tab called “Execution” and is currently only usable via the HEDDA.IO web interface.

Azure Data Lake Storage

Azure Data Lake Storage has been added as a new Connection Type for ADLS Gen2 enabled Azure Storage Accounts.

Improvements

Resilience Options

External Runner

Added retry policy configuration options to the HEDDA.IO External Runner:

{
  "ApiRetryAttempts": 3, // Number of retry attempts to the HEDDA API (only applies to request timeouts) (Default = 3)
  "ApiMaxTimeoutS": 180 // Max wait time before cancelling a request to the HEDDA API (Default = 180)
}

These options only apply to requests made to the HEDDA.IO API.
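The behaviour these two options suggest can be sketched as a retry loop that reacts only to timeouts. This is an illustration, not the External Runner's code: the function name and the pause between attempts are hypothetical, and reading ApiRetryAttempts as "retries after the first try" is an assumption. ApiMaxTimeoutS would be the timeout handed to the HTTP client inside `request`.

```python
import time


def call_with_retries(request, retry_attempts=3, pause_s=0.0):
    """Call request() and retry only when it raises TimeoutError."""
    for attempt in range(retry_attempts + 1):  # first try plus the retries
        try:
            return request()
        except TimeoutError:
            if attempt == retry_attempts:
                raise  # retries exhausted, surface the timeout
            time.sleep(pause_s)


calls = {"count": 0}


def flaky_request():
    """Simulated API call that times out twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TimeoutError("simulated timeout")
    return "ok"


print(call_with_retries(flaky_request))
```

Other errors, for example an authorization failure, pass through immediately in this sketch, matching the note that the retry count only applies to request timeouts.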

Databricks Transport

Miscellaneous

- Added Last Modified Information for Data Links.

- Added better Logging to Api Project.

- Added possibility to add Details to Executions.

- Added UseDetails to .NET Runner Definition, so that additional information can be stored alongside the execution.

- Added an option to change the DuckDbPackageSource for systems that are behind a firewall (Preview_Reader_ExtensionPath).

- Improved DataUpload performance and size limitations.

- Increased performance for some Database queries.

Bugfixes

- Fixed an issue writing back the Data with some unexpected DataTypes.

- Fixed toggle alignment in Knowledge Base Export Drawer.

- Fixed undesired behavior when copying an existing Domain Mapping and changing its values.

- Fixed an issue where uploading the Result Parquet file in .NET Runner failed intermittently.

- Improved error feedback when using the External Connections Test button.

- Fixed Rulebook Search being Case Sensitive.

- Null/Undefined values in the Lookup Preview table will now be displayed as

- Fixed Execution Statistics not being created in some cases.

- Fixed links to Business Rules from the Knowledge Base Dashboard Overview section.

- Fixed an issue where the Run Page would still display the old Mapping after changing it on a Run.

- Fixed an issue where a Knowledge Base could not be deleted.

- Fixed Lookup Preview table overflow.

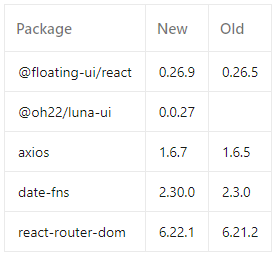

Package Updates

Improvement

- Updated the behavior of apply Domains in Preview Rule Filter to also include Domains from Preparation Formula and Action Values.

New Features

DuckDb In External Runner

We added experimental support for DuckDb as Loader and Exporter in the External Runner.

For loading, this yields better performance (around 25% faster) than the current approach. The Loader can be activated by setting the Hedda:ExperimentalDuckDbLoader configuration in the External Runner container config.

For exporting, DuckDb was also implemented as an option. Although the performance is currently worse (3x slower) than the current implementation, there might be potential in the future. It can be activated with the Hedda:ExperimentalDuckDbExporter Config.

DuckDb Preview Reader

DuckDB was introduced as a new option for the Preview Reader configuration. Choosing DuckDB, which is directly executed in the Frontend Service, helps reduce the overall server count, eliminating the need for Trino and Hive installations. With proper configuration, DuckDB can even outperform Trino Reader. To utilize DuckDB, we suggest at least a P2 Plan for your App Service and implementing a mounted Premium Storage to store the Database File.

Configuration

The Configuration of TenantId, ClientId and ClientSecret is optional on Azure Deployments.

Custom Service Principal

Simply select Custom Service Principal when choosing an Authentication Type for your Connection and enter the required credentials.

Lakehouse Connection

Use LUNA.UI as unified UI Framework

Improvement

- Improved performance when publishing a Knowledge Base

- Improved performance when loading Domains

- Improved Transport Schema Logic for Transports where it is costly to load the schema directly, e.g. Databricks.

- Further DataType optimizations for optimal performance

Bugfixes

- Fixed a bug where a reference was lost for Data Link Mappings, when a Domain Name changed.

- ContainerTrinoReader now correctly handles a previously unknown container revision state, instead of showing an error

- The Publish Knowledge Base window now indicates if changes to the metadata of the Knowledge Base were made. Metadata includes the name, description, data responsibility office and category of a Knowledge Base.

- Fixed a bug where a user working on a Knowledge Base was switched to another Knowledge Base that another user had published.

- Fixed an issue where the Get Files button would not correctly react to form changes in the External Connection window

Package Updates

Frontend

Improvements

- Improved Logging in .net Runner for better Debugging experience

- Improved Queue Handling in .net Runner to accommodate Stale Queues

Bugfixes

- Fixed an issue with NULL values in Lookup Key Domains

- Fixed an issue in Handling Special Decimals created by native Spark Parquet Uploader

- Fixed an issue with Dates before year 100 in INT96 dateformat

Breaking Changes

Dotnet Runner

The dotnet runner execution is now asynchronous. This changes how the library is called when it is used directly.

New Features

Business Rule name

Preview Reader Retry Policy

- RetryDelay: Specifies the delay in seconds between attempts of retrieving the current Trino Server status. The default value is 5.

- RetryAttempts: Specifies the maximum number of retry attempts for retrieving the current Trino Server status. The default value is 6.
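How the two settings interact can be sketched as a polling loop. The function name, the status value "ready" and the injected `sleep` parameter are hypothetical, and counting the first check as one of the attempts is an assumption. With the defaults, such a loop gives up after at most 6 checks and 5 pauses of 5 seconds.

```python
import time


def wait_until_ready(get_status, retry_attempts=6, retry_delay_s=5, sleep=time.sleep):
    """Poll get_status() until it reports "ready" or attempts run out."""
    for attempt in range(retry_attempts):
        if get_status() == "ready":  # "ready" is a placeholder status value
            return True
        if attempt < retry_attempts - 1:
            sleep(retry_delay_s)  # wait before asking again
    return False


# Simulated status sequence; sleep is replaced so the example runs instantly.
statuses = iter(["starting", "starting", "ready"])
print(wait_until_ready(lambda: next(statuses), sleep=lambda seconds: None))
```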

Preview Domain Comparison

Preview Ordering

Improvements

- Reduced the amount of requests to Azure when using the ContainerTrinoReader configuration

- Added the possibility to match Data Links to existing External Connections in a Project, when importing a Knowledge Base

- Changed default for IsReadOnly flag to True for Importing Domains.

- Improved the Performance of the dotnet Runner significantly

- Improved Preview Grid by adding a Header Menu to quickly change Domain Display behavior

- Improved performance of loading the Schema information from Databricks by parallelizing it.

Bugfixes

- Resolved error toast messages appearing after deleting External Connections or Runs

- Fixed an issue with Container Preview Reader when setting very low ShutdownAfterMinutes values

- Fixed an issue with Preview Page showing no loading spinner

- Fixed an issue, where after starting the Preview Reader, “Hive not accessible” would sometimes appear as an error message

- Fixed an issue in Preview where Preparation Domains were not applied correctly

- Fixed an issue in Preview where Last Rule did not check for Changes in Preparation

- Fixed an issue where removing a Domain Mapping from a filtered list leads to wrong removal

New Features

Direct Links

Case Insensitive Key Mapping

It is now possible to select whether the Key Mapping should be case sensitive or case insensitive. This allows for more flexibility in matching with your master data.
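The effect of the setting can be illustrated with a small lookup routine. The function name and the row structure are hypothetical, and `str.casefold` is simply one common way to compare text without regard to case; the notes do not state how HEDDA.IO normalizes keys.

```python
def find_master_record(master_rows, key_field, key, case_sensitive=True):
    """Return the first master data row whose key matches, or None."""

    def normalize(value):
        # casefold() is a stronger variant of lower() for comparisons.
        return value if case_sensitive else value.casefold()

    wanted = normalize(key)
    for row in master_rows:
        if normalize(row[key_field]) == wanted:
            return row
    return None


master = [{"Code": "DE", "Country": "Germany"}, {"Code": "FR", "Country": "France"}]
print(find_master_record(master, "Code", "de", case_sensitive=False))
print(find_master_record(master, "Code", "de", case_sensitive=True))
```

With the case-insensitive setting, "de" finds the "DE" row; with the case-sensitive setting, the same key finds nothing.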

Exclusion of Columns

To mitigate heavy data load and memory pressure of large and wide tables we have introduced the possibility to exclude columns which will not be used in conditions or formulas.

Analyse Preview Data

Extended Domain Filter

The Domain Filter option was extended.

- Numbers

  - Numbers can now be prefixed with >, <, >= and <= to filter accordingly, e.g. > 1.2 filters for numbers greater than 1.2. If the prefix is omitted, the default operator = is used.

- Dates

  - Dates can now consist of the date portion only, e.g. 2024-01-01. This matches all rows which have the same date portion. Dates containing the time portion, e.g. 2024-01-01T00:00:00, respect the time portion, so the example only finds dates which represent exactly midnight on January 1st, 2024. Microseconds are ignored.

  - Similar to Numbers, dates respect the prefixes >, <, >= and <=, e.g. < 2024-01-01 filters for dates earlier than January 1st, 2024.

  - The token $NOW was introduced to filter for dates in comparison to the current timestamp, e.g. >= $NOW.

  - The token $TODAY was introduced to filter for dates in comparison to the current date, e.g. <= $TODAY.

- The NULL value has changed from <NULL> to $NULL.
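The number syntax described above can be made concrete with a small parser. This is a sketch of the filter grammar as the notes describe it, not the product's implementation: the function names are hypothetical, and the handling of $NULL (it only matches missing values, and only with the default operator) is an assumption.

```python
import operator
import re

_OPERATORS = {
    ">": operator.gt, "<": operator.lt,
    ">=": operator.ge, "<=": operator.le,
    "=": operator.eq,
}
# Optional prefix (longer operators first), then the value.
_FILTER = re.compile(r"^\s*(>=|<=|>|<)?\s*(.+?)\s*$")


def parse_number_filter(text):
    """Split a filter such as '> 1.2' into (operator, value)."""
    match = _FILTER.match(text)
    if match is None:
        raise ValueError(f"Empty filter: {text!r}")
    op = match.group(1) or "="  # default operator when no prefix is given
    raw = match.group(2)
    return op, (None if raw == "$NULL" else float(raw))


def matches(value, filter_text):
    """Check one cell value against a number filter."""
    op, target = parse_number_filter(filter_text)
    if target is None or value is None:
        # Assumption: missing values only match the plain $NULL filter.
        return op == "=" and value is target
    return _OPERATORS[op](value, target)


print(parse_number_filter("> 1.2"), matches(2.0, "> 1.2"), matches(None, "$NULL"))
```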

Bugfixes

- Fixed Import Knowledge Base from Databricks where Precision, Scale and Length were not correctly set.

- Fixed an Issue in Databricks All Purpose Api where wide tables did not return all Data.

- Fixed an Issue with Precision Validation in Dotnet Runner in some cases.

- Fixed an Issue in Forms where an empty description could be entered in RichText Component.

- Minor UI Fixes.

Features

Member Select in Business Rule

We introduced a new feature where you can select a Value from the Member List, analogous to referencing the Value of another Domain or Lookup. This requires that the Domain selected in the Condition has Member Search enabled. In the Value Field you can now enter the @ Symbol as you would to reference another Domain or Lookup. A new Tab “Member” should be available. Selecting it loads the List of Parent Members from which you can choose. Note: Unlike a reference to a Lookup or Domain, this does not insert a reference to the Member Value but enters the selected value directly. No connection or information remains that this value was chosen from the Member List.

Formulas/Preparation

We added a new way to manipulate processed data, which opens up new possibilities in Business Rule processing. It started as Formulas, which were meant to provide better data for Conditions, e.g. fixed casing or trimmed strings, and soon turned into a new step in the processing of a Business Rule, the “Preparation”. This approach makes it possible to clean the data before it is used in a Condition. It can also be used to create something like “calculated” Domains, which can be used directly on the left and right hand side of a Condition. This includes setting those Domains from Data Links, so values from Data Links on the left side of a Condition are now possible too.

The Following Formula Items are included:

For Strings

- String: Provides a String value either directly or from a reference like Lookup or Domain

- Concat: Concatenates multiple Strings together, an optional Separator can be entered

- Lower: Transforms to Lower Case

- Upper: Transforms to Upper Case

- Regex Replace: Performs a regex Transformation, Capture Groups can be accessed with $1, $2, … in Replace Field

- Replace: A Regular String Replace

- SubString: Gets a part of a String from a zero based Start value and an optional Length

- Trim: Removes white spaces from String. Either on both sides or only from front or end

- String from Date: Creates a String from a Date with a specified Format

- String from Number: Creates a String from a Number with a specified Format

- String from Boolean: Creates a String from a Boolean where the representing Bool Values can be entered

For Numbers

- Number: Provides a Number value either directly or from a reference like Lookup or Domain

- Addition: Adds multiple Numbers

- Multiplication: Multiplies multiple Numbers

- Subtraction: Subtracts multiple Numbers starting from top to bottom

- Division: Divides multiple Numbers starting from top to bottom

- Round: Rounds the Number to desired decimal count. Rounding can be either up, down or commercial

- Number from String: Transforms a Number from a String with possibility to provide thousand and decimal separators

- Number from Date: Takes the Seconds from Unix Epoch

For Dates

- Date: Provides a Date value either directly or from a reference like Lookup or Domain

- Add Time: Adds Time to a Date

- Date from Number: Creates a date from a Number based on Unix Epoch seconds

- Date from String: Parses a date from a String based on provided Format

For Boolean

- Boolean: Provides a Boolean value either directly or from a reference like Lookup or Domain

- Boolean from String: Creates a Boolean from a string where the True and False Value can be Provided

- Boolean from Number: Creates a Boolean from a Number (0 or 1), otherwise null
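A few of the Formula Items listed above have direct counterparts in most languages. The Python equivalents below are meant as an illustration of the described semantics only; the function names are hypothetical and edge cases (for example invalid input) may be handled differently by HEDDA.IO.

```python
import re
from datetime import datetime, timezone


def regex_replace(text, pattern, replacement):
    """Regex Replace with $1, $2, ... capture groups in the replacement."""
    # Python writes group references as \g<1>, so translate $1 first.
    python_replacement = re.sub(r"\$(\d+)", r"\\g<\1>", replacement)
    return re.sub(pattern, python_replacement, text)


def substring(text, start, length=None):
    """SubString with a zero-based start and an optional length."""
    return text[start:] if length is None else text[start:start + length]


def boolean_from_number(number):
    """Boolean from Number: 0 -> False, 1 -> True, anything else -> None."""
    return {0: False, 1: True}.get(number)


def number_from_date(date):
    """Number from Date: seconds since the Unix Epoch."""
    return date.timestamp()


def date_from_number(seconds):
    """Date from Number: Unix Epoch seconds to a UTC date."""
    return datetime.fromtimestamp(seconds, tz=timezone.utc)


print(regex_replace("Ryan, Travis", r"(\w+), (\w+)", "$2 $1"))
print(substring("HEDDA.IO", 0, 5), boolean_from_number(2))
```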

‘AlwaysTrue’ logical Condition

An ‘Always True’ logical Condition is now available. This new Condition will always be met successfully regardless of nested Conditions and can be used to e.g. skip the evaluation to immediately proceed with the Action of a Business Rule. Conditions within the ‘Always True’ Condition remain editable.

Databricks SQL Warehouse API Support

Added Databricks SQL Warehouse support to HEDDA.IO which has performance improvements over the Databricks Command API and should drastically reduce load times for lookup and preview operations which rely on Databricks.

Miscellaneous

- Improved performance when loading statistics

- Improved Value Field to not show Lookup Tab if no Lookups are present

- Added multiple receivers to Twilio alert sink

- Removed profiling from python example code temporarily

- The Data Type Validation option will now be selected by default in the Runs Execution Widget under the Domains tab

- Improved DotnetRunner Performance

- Extended Business Rule Node in Rulebook Overview. Condition will now be partially shown directly in the node and can be viewed in full on click without leaving the page.

- Hardened publishing to exclude generated items through the API within valid references

- Improved default templates for events

- Drastically improved load times when initially loading a Project in the UI

- Added Collapsible Sidebar

- Added Live / Edit Switch to Global Search

- Added Search to Mapping Detail and Form

Features

Variable Domains

Domains can now be marked as Variables. Variable Domains can still be used in Business Rules and Actions but will not appear in Mappings, as they are not read from the Source Data. This means that they no longer need to be present in, e.g., the source data frame. In addition, Variable Domains can have a Default Value configured. On the Preview Screen, Variable Domains are hidden by default but can be made visible through the Domain Selection.

Last Applied Changes in Preview

The Preview Row Result View will now display the Last Rule which made a change to a specific Domain. Every Domain which has changed during the execution will display an Info Icon on hover. Clicking on it will display the Last Rule and Rulebook.

Databricks Transport

Support for Databricks as a Transport has been implemented. It is now possible to use Databricks as a Lookup or External Member Source. Furthermore, Databricks can be used to create Knowledge Bases. This Transport uses the General Cluster. A version with SQL Warehouse Cluster support is planned for the future.

Support Page

The Support Page now gives you the possibility to submit an email ticket to our customer support. Enter a subject and click the corresponding button to open your email client.